“Interpretability methods” are designed to shed light on how machine-learning models make predictions, but experts advise caution in their use.

About ten years ago, deep-learning models began achieving superhuman results on some tasks, from beating world-champion board-game players to outperforming doctors at diagnosing breast cancer.

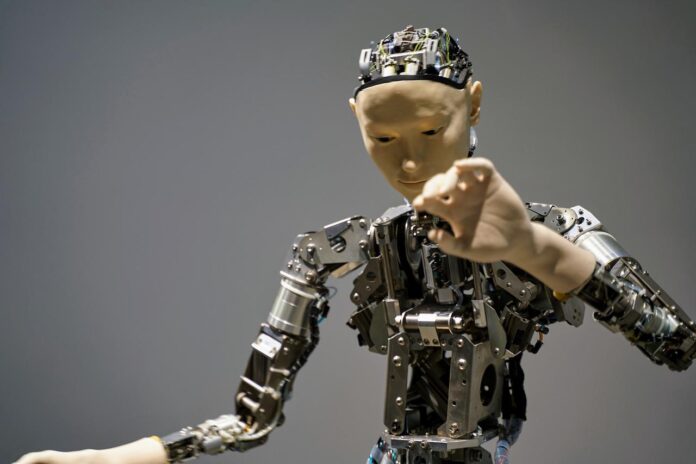

These powerful deep-learning models are usually based on artificial neural networks, which were first proposed in the 1940s and have since become a popular type of machine learning. A neural network learns to process data using layers of interconnected nodes, or neurons, loosely modelled on the human brain.

As the field of machine learning has grown, artificial neural networks have grown along with it.

Deep-learning models may contain millions or even billions of nodes, and they are trained on massive quantities of data to perform detection or classification tasks. Because the models are so complicated, even the experts who build them do not fully understand how they work, which makes it difficult to tell whether they are working correctly.

A model developed to help doctors diagnose patients might correctly predict that a skin lesion is malignant, but it might have done so by focusing on an irrelevant mark that frequently appears in pictures of diseased tissue, rather than on the cancerous tissue itself. This is known as a spurious correlation: the model gets the prediction right, but for the wrong reason. In a real clinical setting, where the mark does not appear on cancer-positive images, the model could miss cancer diagnoses.

With so much uncertainty surrounding these so-called “black-box” models, how can one work out what is happening inside them?

This dilemma has given rise to a new and rapidly growing field of study in which researchers develop and test explanation techniques (also known as interpretability methods) that attempt to shed light on how black-box machine-learning models make predictions.

What are explanation methods?

At their most fundamental level, explanation techniques are either global or local. A local explanation focuses on how the model made one specific prediction, whereas a global explanation aims to characterise the model’s overall behaviour. Global explanations are often produced by building a separate, smaller and more intelligible model that mimics the bigger, black-box model.

However, because deep-learning models work in fundamentally complex and nonlinear ways, building an effective global explanation model for them is particularly difficult. According to Yilun Zhou, a graduate student in the Interactive Robotics Group of the Computer Science and Artificial Intelligence Laboratory (CSAIL), this has led researchers to turn much of their attention to local explanation approaches instead.
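To make the idea of a global surrogate concrete, here is a minimal sketch. It assumes a hypothetical `black_box` function standing in for an opaque model and fits the simplest possible surrogate to the black box’s own outputs: a single-threshold rule on one feature (a decision stump). The fidelity score, the share of probe inputs on which the surrogate agrees with the black box, indicates how much of the model’s behaviour the simple explanation captures.

```python
import random
from typing import List, Tuple

Point = Tuple[float, float]

def black_box(x1: float, x2: float) -> int:
    """Hypothetical opaque model: a nonlinear rule we pretend we cannot inspect."""
    return 1 if x1 * x1 + 0.3 * x2 > 0.5 else 0

def fit_stump(points: List[Point], labels: List[int]) -> Tuple[int, float, float]:
    """Fit a one-feature rule ("predict 1 if x[f] > t") that best mimics `labels`.

    Returns (feature_index, threshold, fidelity), where fidelity is the fraction
    of points on which the stump agrees with the black box.
    """
    best = (0, 0.0, -1.0)
    for f in range(len(points[0])):
        # Try every observed value of this feature as a candidate threshold.
        for t in sorted({p[f] for p in points}):
            preds = [1 if p[f] > t else 0 for p in points]
            agreement = sum(pr == y for pr, y in zip(preds, labels)) / len(labels)
            if agreement > best[2]:
                best = (f, t, agreement)
    return best

random.seed(0)
# Probe the black box on random inputs in [0, 1) x [0, 1) and record its answers.
points = [(random.random(), random.random()) for _ in range(500)]
labels = [black_box(x1, x2) for x1, x2 in points]

feature, threshold, fidelity = fit_stump(points, labels)
print(f"surrogate: predict 1 if x{feature + 1} > {threshold:.2f} (fidelity {fidelity:.0%})")
```

Because the hypothetical black box also depends on `x2`, the one-rule surrogate cannot reach 100% fidelity. With a genuinely complex, nonlinear deep network, the gap between a simple global surrogate and the real model can be far larger, which is the difficulty described above.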

There are three major types of local explanation procedures in use today.

The first and most widely used type is feature attribution. Feature attribution techniques highlight which features were most important to the model when it made one particular decision.

Features are the input variables that are fed to a machine-learning model and used in its prediction. When the data is tabular, features are drawn from the columns of a dataset (they are transformed using various techniques so the model can process the raw information). For image-processing tasks, on the other hand, every pixel in an image is a feature. If a model predicts that an X-ray image shows cancer, for instance, a feature attribution method would highlight the pixels in that specific X-ray that were most important for the model’s prediction.

Essentially, feature attribution methods show what the model pays the most attention to when it makes a prediction.

“Using this feature attribution explanation, you can check to see whether a spurious correlation is a concern. For instance, it will show if the pixels in a watermark are highlighted or if the pixels in an actual tumour are highlighted,” says Zhou.
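One simple way to compute a feature attribution is occlusion: replace one feature (here, one pixel) at a time with a neutral baseline value and measure how much the model’s output drops. The sketch below is only an illustration, not the method Zhou uses; the 3x3 “image” and the `lesion_model` scoring function are hypothetical, built so that the model has learned a spurious correlation with a watermark in the top-left corner.

```python
from typing import Callable, List

Image = List[List[float]]

def lesion_model(img: Image) -> float:
    """Hypothetical 'malignant' score that leans on a spurious watermark.

    It weights the top-left pixel (where a watermark happens to appear in the
    training photos) far more than the lesion pixels in the centre.
    """
    watermark = img[0][0]
    lesion = (img[1][1] + img[1][2] + img[2][1]) / 3
    return 0.8 * watermark + 0.2 * lesion

def occlusion_attribution(model: Callable[[Image], float], img: Image,
                          baseline: float = 0.0) -> Image:
    """Attribution of each pixel = drop in model score when that pixel is set to `baseline`."""
    original = model(img)
    heatmap = []
    for r in range(len(img)):
        heat_row = []
        for c in range(len(img[r])):
            occluded = [list(row) for row in img]  # copy so the input is not mutated
            occluded[r][c] = baseline
            heat_row.append(original - model(occluded))
        heatmap.append(heat_row)
    return heatmap

photo = [
    [1.0, 0.0, 0.0],  # top-left 1.0 = watermark
    [0.0, 1.0, 1.0],  # lesion pixels at (1, 1), (1, 2) and (2, 1)
    [0.0, 1.0, 0.0],
]

heatmap = occlusion_attribution(lesion_model, photo)
for row in heatmap:
    print(" ".join(f"{v:.2f}" for v in row))
```

The watermark pixel receives an attribution of about 0.8, far above any lesion pixel, which is exactly the warning sign Zhou describes. With real images, the same loop would typically occlude patches of pixels rather than single pixels.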

A second type of explanation method is the counterfactual explanation. Given an input and a model’s prediction, these approaches show how to change that input so that it falls into a different class. For instance, if a machine-learning model predicts that a borrower would be turned down for a loan, a counterfactual explanation shows which factors would need to change for her application to be accepted. Perhaps her credit score or income, both features used in the model’s prediction, would need to be higher for her to be approved.

“The beautiful thing about this explanation technique is it shows you precisely how you need to adjust the input to flip the decision, which might have practical utility. For someone who is applying for a mortgage and didn’t get it, this explanation would tell them what they need to do to achieve their desired outcome,” he adds.
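The loan example can be sketched as a brute-force search. Everything here is hypothetical: `loan_model` is a made-up linear approval rule standing in for a trained model, and the step sizes and cost function are assumptions. Practical counterfactual methods typically use smarter optimisation and also consider which changes are realistic, but the core idea is the same: find a small change to the input that flips the model’s decision.

```python
from itertools import product
from typing import Optional, Tuple

def loan_model(credit_score: float, income: float) -> bool:
    """Hypothetical approval rule standing in for a trained model (True = approved)."""
    return credit_score + income / 1_000 >= 720

def counterfactual(credit_score: float, income: float,
                   score_step: float = 10, income_step: float = 1_000,
                   max_steps: int = 50) -> Optional[Tuple[float, float]]:
    """Search for the cheapest increase to (credit_score, income) that gets the loan approved.

    Cost = total number of steps taken; one credit-score step is assumed to cost
    the same as one income step. This cost function is an illustrative assumption.
    """
    best = None  # (cost, score_steps, income_steps)
    for s, i in product(range(max_steps + 1), repeat=2):
        if loan_model(credit_score + s * score_step, income + i * income_step):
            if best is None or s + i < best[0]:
                best = (s + i, s, i)
    if best is None:
        return None  # no counterfactual within the search range
    _, s, i = best
    return credit_score + s * score_step, income + i * income_step

# Applicant: 650 + 50_000 / 1_000 = 700 < 720, so the model denies the loan.
print(counterfactual(650, 50_000))  # → (670, 50000)
```

Here the explanation reads: “with a credit score of 670 instead of 650, the same application would have been approved.” An applicant the model already approves gets back their own input unchanged.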

The third type of explanation approach is the sample importance explanation. Unlike the other two, this approach requires access to the data that were used to train the model.

A sample importance explanation shows which training sample a model relied on most when it made a particular prediction; ideally, this is the training sample most similar to the input data. This form of explanation is particularly helpful when a prediction seems unreasonable. A data-entry error may have affected a specific sample used to train the model; with this knowledge, one could fix that sample and retrain the model to improve its accuracy.
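Sample importance can be estimated in several ways; a simple, if expensive, one is leave-one-out: remove each training sample in turn, retrain, and measure how far the prediction for the input of interest moves. The sketch below assumes a hypothetical k-nearest-neighbours regressor (for which “retraining” just means dropping a point) and toy data containing one deliberate data-entry error.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (feature, target)

def knn_predict(train: List[Sample], x: float, k: int = 3) -> float:
    """Predict the target for x as the mean target of its k nearest training samples."""
    nearest = sorted(train, key=lambda s: abs(s[0] - x))[:k]
    return sum(t for _, t in nearest) / len(nearest)

def sample_importance(train: List[Sample], x: float, k: int = 3) -> List[float]:
    """Leave-one-out importance: how far the prediction for x moves when each sample is removed."""
    full = knn_predict(train, x, k)
    return [abs(full - knn_predict(train[:i] + train[i + 1:], x, k))
            for i in range(len(train))]

# Toy data where target is roughly 10 * feature; sample 3 has a typo (420 instead of 42).
train = [(1.0, 10.0), (2.0, 21.0), (3.0, 29.0), (4.2, 420.0), (5.0, 50.0), (6.0, 61.0)]
query = 4.0

print("prediction:", knn_predict(train, query))  # implausibly high for feature 4.0
scores = sample_importance(train, query)
suspect = max(range(len(train)), key=scores.__getitem__)
print("most influential sample:", suspect, train[suspect])

# Correct the entry and "retrain" (for k-NN, simply rebuild the training set).
train[suspect] = (4.2, 42.0)
print("prediction after fix:", knn_predict(train, query))
```

The mistyped sample is both the nearest neighbour of the query and the one whose removal moves the prediction most, so it stands out immediately; after the fix, the prediction drops to about 40.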

How are explanation methods used?

One motivation for developing these explanations is to perform quality assurance and debug the model. For example, a better understanding of how features influence a model’s decision would allow one to notice that a model is operating incorrectly and either intervene to fix the problem or throw the model away and start again.

Another, more recent, area of research explores using machine-learning models to discover scientific patterns that humans have not uncovered before. For instance, a model might pick up on hidden patterns in an X-ray image that represent early pathological pathways for cancer, patterns that were either unknown to human doctors or considered unimportant by clinicians, Zhou adds.

However, that field of study is still in its infancy.

Words of warning

Marzyeh Ghassemi, an assistant professor and head of the Healthy ML Group in CSAIL, advises end-users to be cautious when using explanation techniques.

As machine learning has been adopted in more fields, from health care to education, explanation techniques are increasingly being used to help decision-makers better understand a model’s predictions, so they know when to trust the model and use its guidance in practice. Ghassemi, however, cautions against using these techniques in that way.

“We have observed that explanations make people, both experts and nonexperts, overconfident in the ability or the advice of a specific recommendation system. I think it is essential for people not to turn off that internal circuitry asking, ‘let me question the advice that I am given,’” she adds.

She adds that scientists know that explanations cause individuals to become overconfident, citing several recent studies by Microsoft researchers as evidence.

Explanation methods are far from a silver bullet and have their share of flaws. For one, recent research by Ghassemi has shown that explanation approaches can perpetuate biases and lead to worse outcomes for people from disadvantaged groups.

Another pitfall of explanation methods is that it is often difficult to tell whether an explanation technique is correct in the first place. One would need to compare the explanations to the actual model, but since the user doesn’t know how the model works, this is circular logic, Zhou argues.

He and other researchers are working on refining explanation techniques so they are more faithful to the model’s actual predictions, but Zhou cautions that even the best explanation should be taken with a grain of salt.

“In addition, people often consider these models to be human-like decision-makers, and we are prone to overgeneralization. We need to calm people down and hold them back to truly make sure that the generalised model knowledge they create from these local explanations is balanced,” he says.

What’s next for machine-learning explanations?

Rather than focusing only on providing explanations, Ghassemi argues that researchers need to devote more effort to studying how information is presented to decision-makers so that they understand it, and that more regulation is needed to ensure machine-learning models are used responsibly in practice. Better explanation methods alone are not the answer.

“Many individuals in the industry realise that we can’t just take this data, construct a beautiful dashboard and assume that people will perform better with it. I am hopeful this will lead to recommendations for changing how we show information in highly complex sectors, such as medicine,” she adds.

In addition to work aimed at improving explanations, Zhou expects to see more research on explanation approaches for specific use cases, such as model debugging, scientific discovery, fairness audits, and safety assurance. By identifying fine-grained properties of explanation techniques and the requirements of particular use cases, researchers could build a theory that matches explanations to specific scenarios, which might help them avoid some of the pitfalls of using explanations in real-world situations.

Originally reported here https://news.mit.edu/2022/explained-how-tell-if-artificial-intelligence-working-way-we-want-0722